Spark and Machine Learning

ABSTRACT

In today’s world, a large amount of data is created from various sources such as web applications and social media. This data can be analysed and used to train machines. Machine learning is the technique of creating decision-making models and algorithms by applying statistical techniques to data. When processing Big Data for machine learning, Spark plays a key role in executing the algorithms efficiently. Many efforts have been made to improve machine learning techniques using Spark. In this literature review we examine some of the techniques that help improve the performance of machine learning in Spark. We also compare the methodologies and discuss the results.

INTRODUCTION

Big Data deals with large volumes of data and with complex data. Processing such data requires a huge amount of time, so it is very important to process the continuously generated Big Data quickly. These datasets are used in various studies with techniques such as Machine Learning (ML) and Deep Learning (DL) [1]. Many studies are conducted on these datasets in fields such as medicine, robot training and self-driving cars. They use images, videos and sound to train these machines. ML has therefore received huge attention in research and computing as a way of solving real-life problems.

Within the Big Data ecosystem, Spark is a framework used for processing Big Data and reaching high performance with ML and DL. Apache Spark runs on a cluster computing platform and provides high-level APIs in languages such as Java and Python. The main concern with ML and DL is the very long processing time needed on large datasets. Distributed computing comes into the picture to reduce this execution time. In this paper we look at the performance of machine learning using Spark in different environments and with different techniques, measured with different benchmarking tools.

Different research efforts have analysed and enhanced the performance of machine learning using Spark with approaches such as HPC (High Performance Computing), YARN clusters, a self-tuning approach and HiBench.

Fig. 1. Architecture Of Spark

BACKGROUND

A. Architecture of Spark:

The Spark architecture consists of black and white layers [2]. The architecture rests on two main ideas: Resilient Distributed Datasets (RDD) and the Directed Acyclic Graph (DAG). An RDD in Apache Spark supports two kinds of operations: transformations and actions. The RDD is the main component of Spark, providing reliability and scalability. Figure 1 shows the architecture of the Spark framework, which consists of a master node (driver) and many worker nodes (slaves). The master node is responsible for managing all the worker nodes. For every application there is one driver node and one cluster node. The Cluster Manager manages the communication between the nodes.
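The distinction between transformations and actions can be illustrated with a small, pure-Python toy. This is only a sketch under stated assumptions: `ToyRDD` and its methods are hypothetical names invented for illustration and are not Spark's API. The sketch records each transformation in a lineage list (a linear stand-in for the DAG) and performs no work until an action such as `collect()` is called.

```python
class ToyRDD:
    """Hypothetical toy dataset: transformations are lazy, actions trigger work."""

    def __init__(self, compute, lineage):
        self._compute = compute  # zero-argument function returning an iterator
        self.lineage = lineage   # recorded chain of operations

    @classmethod
    def from_list(cls, items):
        data = list(items)
        return cls(lambda: iter(data), ["source"])

    # Transformations: describe a new dataset, compute nothing yet.
    def map(self, fn):
        return ToyRDD(lambda: (fn(x) for x in self._compute()),
                      self.lineage + ["map"])

    def filter(self, pred):
        return ToyRDD(lambda: (x for x in self._compute() if pred(x)),
                      self.lineage + ["filter"])

    # Actions: walk the recorded chain and produce a result.
    def collect(self):
        return list(self._compute())

    def count(self):
        return sum(1 for _ in self._compute())


calls = []


def square(x):
    calls.append(x)  # track when the function is actually executed
    return x * x


evens = ToyRDD.from_list(range(1, 6)).map(square).filter(lambda v: v % 2 == 0)
calls_before_action = len(calls)  # still zero: nothing has run so far
result = evens.collect()          # the action triggers the whole chain
```

Because the lineage is kept rather than the data, a lost result could in principle be recomputed from the source, which is the intuition behind the reliability property mentioned above.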

TECHNIQUES FOR MACHINE LEARNING

In this section we discuss the different techniques and approaches for performing machine learning and compare them:

Smart-MLlib Technique:

In this technique the researchers proposed a prototype called Smart-MLlib [3]. It allows machine learning implementations to be written with a similar API. To test the performance of Smart-MLlib, the researchers used different machine learning algorithms and found that it outperformed MLlib by over 800%. The library was evaluated on four algorithms: K-means, Linear Regression, Gaussian Mixture Model (GMM) and Support Vector Machines (SVM). A Smart-MLlib implementation is described by a reduction object and a set of API functions for the algorithm. Implementing an algorithm requires the following functions on the reduction object: Genkey(), Accumulate(), Merge() and Postcombine().

Genkey(): Returns a constant number.

Accumulate(): Processes the current input and reduces it into the reduction object.

Merge(): Further reduces the map generated by the function Accumulate().

Postcombine(): Finalises the map using the accumulated values.
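The four functions above can be sketched in pure Python for one gradient step of a one-dimensional linear regression. This is a minimal illustration, not Smart-MLlib's actual code: the Python signatures, the `[gradient_sum, count]` layout of the reduction object and the sample data are all assumptions made for the example.

```python
from typing import Dict, List, Tuple

Record = Tuple[float, float]  # (x, y) pair; assumed record layout
RedMap = Dict[int, List[float]]  # key -> [gradient_sum, record_count]


def gen_key(record: Record) -> int:
    # A constant key: every record folds into the same reduction object.
    return 0


def accumulate(red_map: RedMap, record: Record, w: float) -> None:
    # Fold one record into the reduction object for its key.
    x, y = record
    obj = red_map.setdefault(gen_key(record), [0.0, 0.0])
    obj[0] += (w * x - y) * x  # gradient contribution of this record
    obj[1] += 1


def merge(a: RedMap, b: RedMap) -> RedMap:
    # Combine two partial maps, e.g. produced on different partitions.
    out = {k: list(v) for k, v in a.items()}
    for k, v in b.items():
        if k in out:
            out[k][0] += v[0]
            out[k][1] += v[1]
        else:
            out[k] = list(v)
    return out


def post_combine(red_map: RedMap) -> Dict[int, float]:
    # Finalise: turn accumulated sums into the mean gradient.
    return {k: g / n for k, (g, n) in red_map.items()}


partitions = [[(1.0, 2.0), (2.0, 4.0)], [(3.0, 6.0)]]  # hypothetical data
w = 0.0  # current weight

partials = []
for part in partitions:
    local: RedMap = {}
    for rec in part:
        accumulate(local, rec, w)
    partials.append(local)

combined: RedMap = {}
for p in partials:
    combined = merge(combined, p)

mean_gradient = post_combine(combined)[0]
```

Each partition only ever holds one small reduction object per key instead of a list of intermediate key-value pairs, which is the usual motivation for this style of API.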

| | K-Means | Linear Regression | GMM | SVM |
|---|---|---|---|---|
| Input size | 1 GB, 2 GB, 4 GB, 16 GB | 1 GB, 2 GB, 4 GB, 16 GB | 1 GB, 2 GB, 4 GB, 16 GB | 1 GB, 2 GB, 4 GB, 16 GB |
| Iterations | 1000 iterations on 16-dimension input | 15 input dimensions and 1 output dimension, 1000 iterations | 100 iterations using 4 Gaussian models | 15 input dimensions and 1 output dimension, 1000 iterations |
| Result | Averaging over the three input sizes, Smart-MLlib K-means scales 220% better than 4 nodes and 32 nodes | Averaging over the three input sizes, Smart-MLlib K-means scales 220% better than 4 nodes and 32 nodes | 2 to 15 times advantage over Smart; GMM tests show high efficiency, 13 to 54 times advantage | Implementation scaled 200% better than Spark’s between 4 nodes and 32 nodes |

TABLE I

COMPARING PERFORMANCE OF DIFFERENT ML ALGORITHMS

All the results show that Smart-MLlib performs much better than MLlib. The overall performance gain reaches 800%. All the algorithms perform much better with Smart-MLlib than with the older MLlib.

YARN Cluster Using HiBench:

In this technique the researchers took a different approach to testing the performance of Big Data analytics. They used HiBench, a benchmarking tool, and applied it at large scale in a cluster environment [1]. The paper explains how YARN allocates memory to the computing resources in Spark when jobs are submitted to the cluster. Spark is benchmarked across multiple machines by measuring factors such as execution time, throughput and CPU usage. A large dataset is used to measure the performance of Spark in fully distributed mode. To this end the researchers used a cluster consisting of 3 worker nodes and 1 master node. Each node has an Intel Xeon processor with 20 cores, 128 GB RAM, a 512 GB SSD and a 1 TB HDD, running Hadoop 2.7. The researchers found that throughput increased by 1.44 and 1.45 times with two nodes compared with one node, and by 1.93 and 1.87 times with a three-node cluster. The execution time increases slightly as the size of the data increases. Throughput is the amount of data processed per unit of time. Linear Regression shows higher throughput than the other analytic algorithms. The research concluded that Spark performs well in terms of throughput with large data and larger cluster sizes. The researchers also suggested, as future work, running multiple machine learning and deep learning jobs with Spark on multiple machines.
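The reported throughput gains can be put in perspective by dividing each gain by the number of nodes, which gives a scaling efficiency (1.0 would mean perfectly linear scaling). The short calculation below is a sketch: the function name `scaling_efficiency` is an assumption for illustration, and the only inputs are the gains quoted above relative to a single node.

```python
def scaling_efficiency(gain: float, nodes: int) -> float:
    """Fraction of ideal linear scaling achieved with the given node count."""
    return gain / nodes


# Throughput gains relative to one node, as quoted in the text above.
reported_gains = {2: (1.44, 1.45), 3: (1.93, 1.87)}

efficiency = {
    nodes: tuple(round(scaling_efficiency(g, nodes), 3) for g in gains)
    for nodes, gains in reported_gains.items()
}
```

With these figures the efficiency is roughly 72% on two nodes and between about 62% and 64% on three nodes, so the gain per added node shrinks as the cluster grows.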

Big Data Analysis Using YARN Cluster:

This technique is a further extension of the one described above. Here the researchers examine the performance of Apache Spark and also evaluate the performance of TensorFlowOnSpark (TFoS), which is highly recommended for distributed deep learning [4]. They check performance by benchmarking on a cluster, measuring execution time, throughput, CPU usage and so on. They also want to check whether pseudo-distributed mode is more reliable than the other modes. They used the same environment as in the technique above. The researchers found that, for large datasets, execution time increases slightly as the data size grows. The only exceptions are Sort and TeraSort, where the execution time increased very slightly. It was also observed that with a large number of workers an accuracy of 80% is reached in less time. The throughput of Linear Regression is very high. As the size of the data increases, throughput also increases, because the available computing resources are sufficient to process all the workloads. The research shows that TFoS and Spark perform well in fully distributed mode.

HPC Environment:

In this research the researchers used an HPC environment for machine learning [5]. To optimise deep learning on Apache Spark, they made changes in the following areas to improve overall performance.

Memory Optimisation: The researchers found that the RDD is not sufficient for machine learning algorithms. They therefore proposed an extension to the RDD, MapRDD, which removes the need to compute and store the entire dataset in memory. MapRDD exploits the implicit record-level relation between parent and child datasets under the map transformation. With an RDD, the whole dataset is loaded into main memory and a small sample is selected for the training process. With MapRDD, only the sparsely selected inputs are loaded into main memory.
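The idea behind the record-level relation can be shown with a small pure-Python comparison. This is only a sketch of the principle, not the MapRDD implementation from [5]: the function names, the `normalise` map function and the dataset are hypothetical. Because a map produces child record i from parent record i alone, a sampled mini-batch can be computed from just the sampled parent records.

```python
import random


class CountingMap:
    """Wraps a map function and counts how many records it is applied to."""

    def __init__(self, fn):
        self.fn = fn
        self.calls = 0

    def __call__(self, record):
        self.calls += 1
        return self.fn(record)


def normalise(record):
    # Hypothetical per-record preprocessing step.
    return record / 255.0


def sample_after_full_map(parent, fn, indices):
    # Materialise the whole child dataset, then pick the sample.
    child = [fn(r) for r in parent]
    return [child[i] for i in indices]


def sample_record_level(parent, fn, indices):
    # Map only the sampled parent records: child[i] depends on parent[i] alone.
    return [fn(parent[i]) for i in indices]


parent = list(range(1000))
indices = random.Random(0).sample(range(len(parent)), 10)

eager_fn = CountingMap(normalise)
lazy_fn = CountingMap(normalise)
eager_batch = sample_after_full_map(parent, eager_fn, indices)
lazy_batch = sample_record_level(parent, lazy_fn, indices)
```

Both paths return the same mini-batch, but the first applies the map function to every record while the second applies it only to the ten sampled ones.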

Communication Optimisation: The researchers proposed an adapted non-blocking all-reduce implementation, which runs asynchronously alongside the executor process and executes the reduce operation on behalf of tasks. The values can then be retrieved by subsequent tasks in later stages.
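The non-blocking pattern can be sketched in a single process with Python's standard `concurrent.futures` module. This is a stand-in under stated assumptions, not the implementation from [5]: a real all-reduce exchanges data between nodes, whereas here a background thread simply sums vectors, and the names `iallreduce` and `all_reduce_sum` are hypothetical. The point illustrated is that the caller receives a handle immediately, keeps computing, and collects the reduced value later.

```python
from concurrent.futures import Future, ThreadPoolExecutor
from typing import List


def all_reduce_sum(vectors: List[List[float]]) -> List[float]:
    # Element-wise sum across all contributed vectors.
    return [sum(column) for column in zip(*vectors)]


# Background worker that performs reductions on behalf of tasks.
reducer = ThreadPoolExecutor(max_workers=1)


def iallreduce(vectors: List[List[float]]) -> Future:
    # Non-blocking: returns a handle at once instead of waiting for the result.
    return reducer.submit(all_reduce_sum, vectors)


local_gradients = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]  # hypothetical values
pending = iallreduce(local_gradients)

# The task is free to continue with other computation while the reduce runs.
overlapped_work = sum(i * i for i in range(1000))

# A later stage retrieves the reduced value when it is actually needed.
reduced = pending.result()
reducer.shutdown()
```

Overlapping communication with computation in this way is what allows the share of time spent computing to rise.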

The experiments show a speed improvement of 2.6x, and 11.6x theoretically, with K80 graphics cards on a 32-node cluster, with a significant rise in the compute ratio from 31-47% to 82-91%. The researchers also noted an 80% reduction in memory usage.

REFERENCES

[1] H. Ahn, H. Kim, and W. You, “Performance Study of Spark on YARN Cluster Using HiBench,” 2018 IEEE International Conference on Consumer Electronics – Asia, ICCE-Asia 2018, pp. 206–212, 2018.

[2] M. A. Rahman, J. Hossen, and C. Venkataseshaiah, “SMBSP: A Self-Tuning Approach using Machine Learning to Improve Performance of Spark in Big Data Processing,” Proceedings of the 2018 7th International Conference on Computer and Communication Engineering, ICCCE 2018, pp. 274–279, 2018.

[3] “Smart-MLlib: A high-performance machine-learning library,” Proceedings – IEEE International Conference on Cluster Computing, ICCC, pp. 336–345, 2016.

[4] H. Y. Ahn, H. Kim, and W. You, “Performance Study of Distributed Big Data Analysis in YARN Cluster,” 9th International Conference on Information and Communication Technology Convergence: ICT Convergence Powered by Smart Intelligence, ICTC 2018, pp. 1261–1266, 2018.

[5] Z. Li, J. Davis, and S. A. Jarvis, “Optimizing Machine Learning on Apache Spark in HPC Environments,” Proceedings of MLHPC 2018: Machine Learning in HPC Environments, Held in conjunction with SC 2018: The International Conference for High Performance Computing, Networking, Storage and Analysis, pp. 95–105, 2019.

Essay Writing Service Features

Our Experience

No matter how complex your assignment is, we can find the right professional for your specific task. Contact Essay is an essay writing company that hires only the smartest minds to help you with your projects. Our expertise allows us to provide students with high-quality academic writing, editing & proofreading services.

Free Features

Free revision policy

$10Free bibliography & reference

$8Free title page

$8Free formatting

$8How Our Essay Writing Service Works

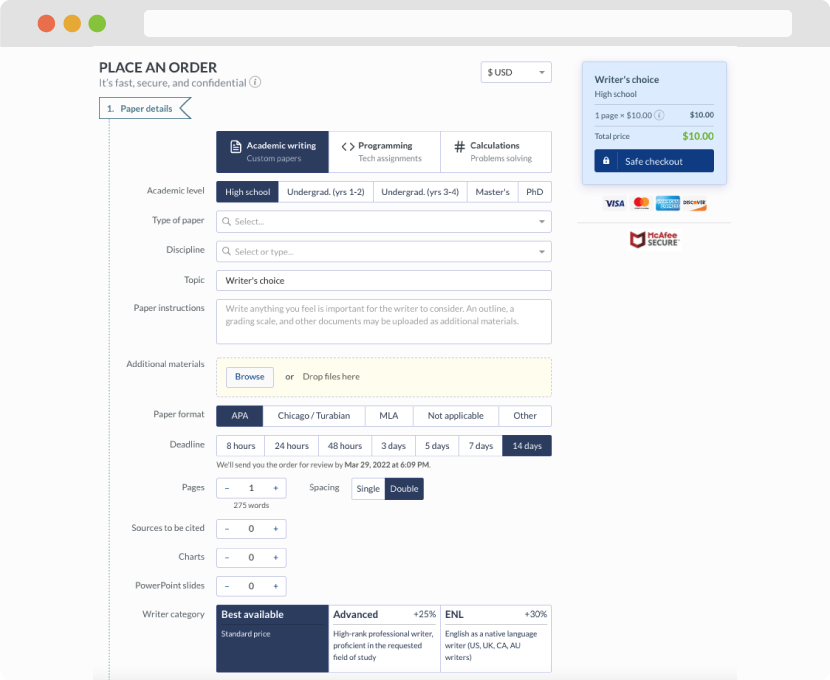

First, you will need to complete an order form. It's not difficult but, in case there is anything you find not to be clear, you may always call us so that we can guide you through it. On the order form, you will need to include some basic information concerning your order: subject, topic, number of pages, etc. We also encourage our clients to upload any relevant information or sources that will help.

Complete the order form

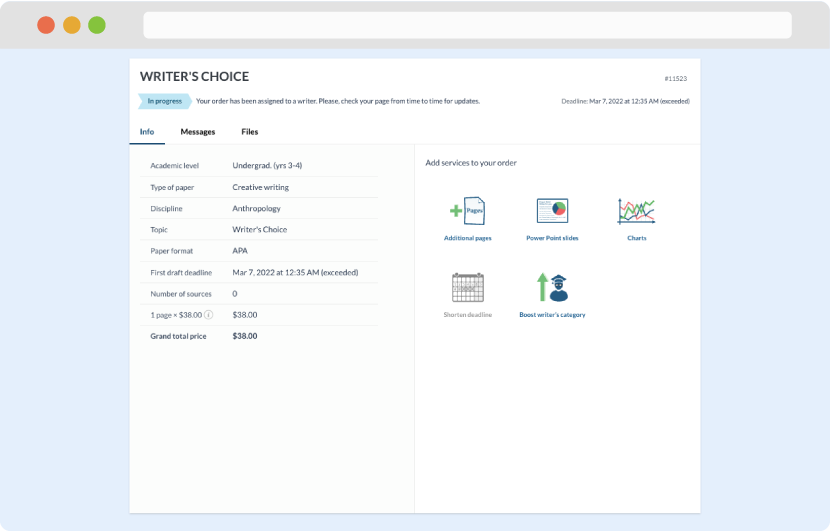

Once we have all the information and instructions that we need, we select the most suitable writer for your assignment. While everything seems to be clear, the writer, who has complete knowledge of the subject, may need clarification from you. It is at that point that you would receive a call or email from us.

Writer’s assignment

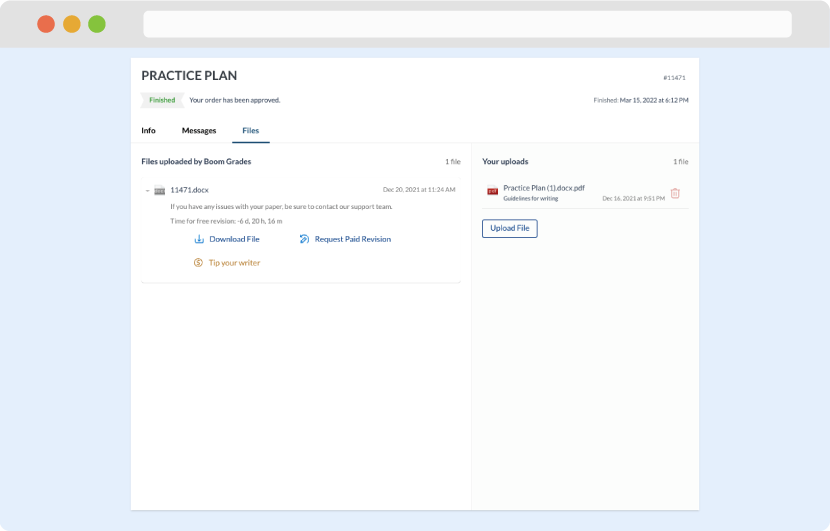

As soon as the writer has finished, it will be delivered both to the website and to your email address so that you will not miss it. If your deadline is close at hand, we will place a call to you to make sure that you receive the paper on time.

Completing the order and download